As a product manager, you should be taking user feedback seriously.

That means having systems in place to respond to any kind of feedback, whether with a quick customer-experience fix or a long-term product development solution.

The first piece of the puzzle is gathering feedback effectively. There are a number of things you could try:

Self-serve feedback forms (this can work as a low-effort passive channel, but won’t give you much context-specific information);

Engaging with your customers first-hand by calling and emailing them to request feedback (this is effective for gathering tailored feedback, but can be very time consuming);

Running in-product microsurveys (great for gathering highly contextual information, and can be delivered at crucial milestones where you think you need to focus on feedback for improving user flows and experience).

Once you actually have that feedback, you have another challenge - what’s the most impactful way to process it?

In other words, what do you actually do with user feedback, and how do you make it actionable?

Even if you’re gathering great feedback, without proper consideration for processing that feedback, you may find that it’s not all that useful.

That’s what we’re focusing on in this article, as well as some best practices for building user surveys that make sure you don’t slip out of touch with your users.

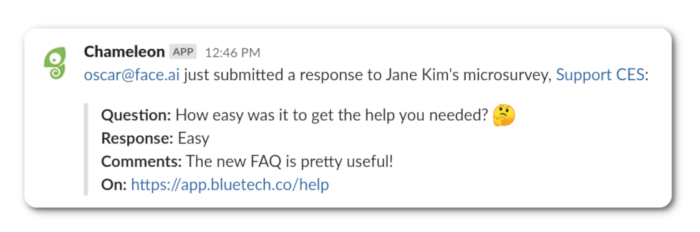

User feedback, straight to your Slack

If you’re like most teams, you probably live in Slack - did you know you can automatically incorporate user feedback directly into your Slack channels, so your team can get the most relevant user inputs ASAP?

This will help your wider teams stay on the pulse and respond to feedback when it matters most. Check out how to do this with Chameleon. Here’s what the integration might look like in practice:

If you’re adventurous, you can set up additional integrations, e.g. sending feedback into tools like productboard, a product management tool for collecting and organizing user feedback, or EnjoyHQ, a user research platform in a similar vein.

Some additional examples of how you could use this integration (not just limited to Slack):

Send survey responses to your CRM of choice (e.g. HubSpot or Salesforce)

Send a follow-up email to thank users for their feedback (e.g. Customer.io)

If you want a full breakdown of how the integration works, check out our help center guide for setting up Slack integration with Chameleon.
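
If you’d rather see the underlying idea in code, here is a minimal Python sketch of pushing a survey response into a Slack channel through a Slack incoming webhook. It is a generic illustration, not how the Chameleon integration is implemented: the function names, message layout and example values are assumptions made up for this sketch, and the webhook URL is one you would generate in your own Slack workspace.

```python
import json
import urllib.request


def build_slack_message(user_email: str, question: str, answer: str) -> dict:
    """Format one survey response as a Slack incoming-webhook payload.

    The message layout is an assumption for this sketch; incoming
    webhooks only require a JSON body with a "text" field.
    """
    return {
        "text": f"New survey response from {user_email}\n*{question}*\n{answer}"
    }


def post_to_slack(webhook_url: str, message: dict) -> int:
    """POST the payload to the webhook URL and return the HTTP status code."""
    request = urllib.request.Request(
        webhook_url,
        data=json.dumps(message).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return response.status
```

You would call `post_to_slack` with your own webhook URL and the result of `build_slack_message`. Keeping the formatting separate from the network call makes the message easy to check without touching Slack.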

Processing feedback

Once you’ve put the systems in place to gather high-quality feedback, you need to follow up. The first step is deciding which channels you’ll use to do so.

Based on the nature of the feedback, you may choose to trigger an email (e.g. if users respond with a low NPS rating) or you may simply want to thank them for providing feedback, which helps avoid feedback fatigue.
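
As a rough illustration of that routing decision, here is a small Python sketch. It assumes the standard NPS bands (0 to 6 detractors, 7 to 8 passives, 9 to 10 promoters); the `SurveyResponse` class and the action names are hypothetical placeholders for whatever your email or messaging tool expects.

```python
from dataclasses import dataclass


@dataclass
class SurveyResponse:
    user_id: str
    nps_score: int  # 0-10, as on a standard NPS question


def follow_up_action(response: SurveyResponse) -> str:
    """Pick a follow-up based on the NPS score (action names are hypothetical)."""
    if not 0 <= response.nps_score <= 10:
        raise ValueError("NPS scores must be between 0 and 10")
    if response.nps_score <= 6:
        # Detractors (0-6): trigger a personal outreach email.
        return "send_outreach_email"
    # Passives (7-8) and promoters (9-10): a simple thank-you is enough.
    return "send_thank_you"
```

For example, `follow_up_action(SurveyResponse("u1", 3))` returns `"send_outreach_email"`, while a score of 9 returns `"send_thank_you"`.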

In any case, you should be categorizing feedback to facilitate streamlined responses, whatever channels you’re using.

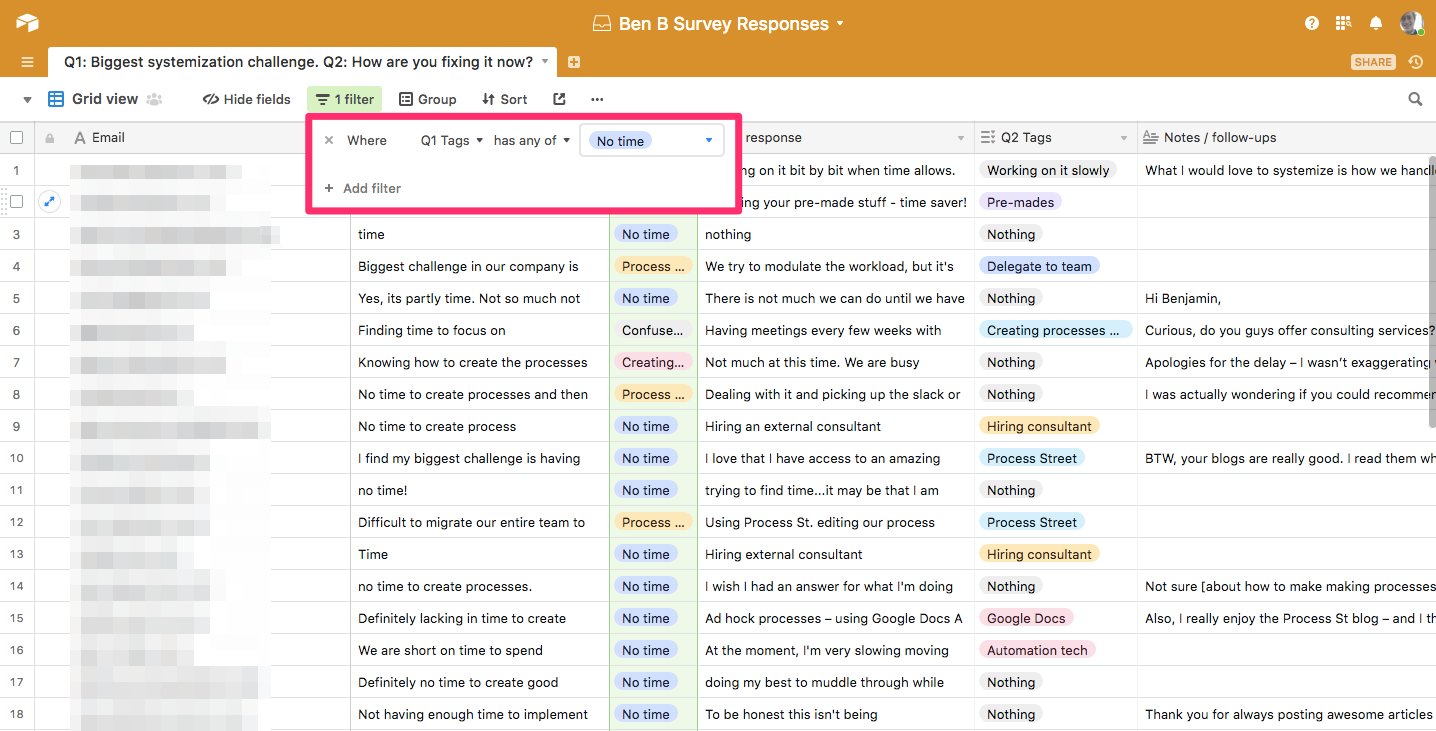

The importance of tagging and categorizing feedback

Ensure that feedback is properly tagged and categorized to make it easier to target user profiles with follow-up emails or in-product messages.

An example of tagging user feedback by pain point: no time, confused about feature X, etc.

In turn, categorizing feedback will also help you prioritize.
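
To make the tagging idea concrete, here is a minimal Python sketch of keyword-based tagging by pain point. The tag names and keyword lists are assumptions invented for the example; in practice you would maintain your own, or tag by hand in your feedback tool.

```python
# Hypothetical mapping from pain-point tag to keywords that suggest it.
TAG_KEYWORDS = {
    "no_time": ["no time", "too busy"],
    "confused_feature_x": ["confus", "don't understand", "unclear"],
}


def tag_feedback(comment: str) -> list[str]:
    """Return every tag whose keywords appear in the comment."""
    text = comment.lower()
    tags = [
        tag
        for tag, keywords in TAG_KEYWORDS.items()
        if any(keyword in text for keyword in keywords)
    ]
    # Leave unmatched comments visible so someone can review them manually.
    return tags or ["untagged"]
```

Simple substring matching will miss plenty of nuance, so treat the output as a first pass that a human reviews, not a final categorization.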

Prioritizing feedback

How do you know what feedback to prioritize? Product teams need ways to filter and organize feedback based on urgency and perceived value.

One technique is to discuss feedback during regular standup or all-hands meetings, to reach a consensus on what feedback is a top priority. In this case, it makes more sense to discuss the type of feedback to be prioritized, which can be further defined and clarified, as opposed to case-by-case firefighting.

Another technique is to taxonomize the different types of user feedback and give each type weighted importance that can be cross-referenced with features or product areas based on their perceived user impact or importance.

This kind of approach allows you to adopt policies like not shipping a product update or new feature unless all feedback above a certain threshold of importance has been resolved.
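
Here is one way the weighting and the shipping policy could be sketched in Python. All of the weights, category names and the threshold are hypothetical; the point is only to show a type weight being cross-referenced with a product-area impact score, and unresolved items at or above a threshold blocking a release.

```python
from dataclasses import dataclass

# Hypothetical weights: how much each feedback type matters,
# and how much user impact each product area has.
TYPE_WEIGHT = {"bug": 3.0, "usability": 2.0, "feature_request": 1.0}
AREA_IMPACT = {"onboarding": 3.0, "billing": 2.0, "settings": 1.0}


@dataclass
class FeedbackItem:
    summary: str
    feedback_type: str
    product_area: str
    resolved: bool = False


def priority(item: FeedbackItem) -> float:
    """Cross-reference the type weight with the product-area impact."""
    return TYPE_WEIGHT[item.feedback_type] * AREA_IMPACT[item.product_area]


def blocking_items(items: list[FeedbackItem], threshold: float) -> list[FeedbackItem]:
    """Unresolved items whose priority is at or above the threshold."""
    return [i for i in items if not i.resolved and priority(i) >= threshold]


def can_ship(items: list[FeedbackItem], threshold: float) -> bool:
    """Ship only when nothing at or above the threshold is still open."""
    return not blocking_items(items, threshold)
```

Multiplying the two weights is just one choice; a sum or a lookup table would work equally well, as long as the team agrees on the scale.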

General feedback vs active feedback

We can understand user feedback as either:

General (based on always-on feedback channels, e.g. support tickets or certain types of in-product feedback collection);

Active (that you are soliciting to answer a question or help with some decision-making).

It goes without saying that these different kinds of feedback will need to be processed differently.

General feedback is largely useful for sustaining a base level of customer satisfaction, and on top of that allows you to uncover product insight that you may not have considered (unknown problems).

Active feedback aims to solve a known problem or address a specific issue: you design questions or prompts for feedback around that problem.

Survey design: how to compel users to give feedback

Let’s look now at some ways you can make sure your surveys actually get answered. Improving your product’s overall approach to user feedback means understanding some best practices for how to survey users.

Briefly, here are the best practices for survey design, each of which I’ll expand on in its own section below:

Ask specific questions (e.g. NPS on a specific feature, or CES for a specific workflow, as opposed to an overall NPS or CES) to make it easier to categorize and process responses.

Leverage user analytics and tracking to inform how you design, deliver and process surveys (e.g. personalized survey questions).

Keep surveys shorter to increase the likelihood of getting a response. Ideally, you can deploy one-question microsurveys to maximize this principle.

Deliver surveys in-product to capture feedback that is in-flow and maximally relevant (as opposed to sending an email, which might be seen and completed hours after the user last even thought about your product).

Ask specific questions

There’s always a temptation when designing surveys to ask questions just for the sake of asking them, but more questions mean more noise, and ultimately more effort for the user. The questions in your microsurveys should always serve a purpose and link back to the key value proposition of your product.

This means focusing on a specific feature or area of your product that you want to gather feedback around. Doing this helps you to gather more relevant, actionable information (as opposed to asking general questions where users might lack the necessary context to give useful feedback).
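
For instance, if each NPS response is recorded against the feature it was asked about, you can score features separately. The sketch below uses the standard NPS formula (percentage of promoters minus percentage of detractors); the `(feature, score)` input shape and the feature names are assumptions for the example.

```python
from collections import defaultdict


def nps(scores: list[int]) -> float:
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("need at least one score")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def nps_by_feature(responses: list[tuple[str, int]]) -> dict[str, float]:
    """Group (feature, score) pairs by feature and score each group."""
    grouped = defaultdict(list)
    for feature, score in responses:
        grouped[feature].append(score)
    return {feature: nps(scores) for feature, scores in grouped.items()}
```

A per-feature breakdown like this points you at the part of the product that needs attention, which a single overall score cannot do.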

Context is crucial here, as is personalizing questions. That’s why it’s important to have good user analytics to inform survey design and delivery.

When your product team builds a user survey with a service like SurveyMonkey or Google Forms, it's tempting to add a big list of questions that are broad in scope and cover a range of different product features (after all, how many chances per week/quarter/year do you get to hold a user's attention for the length of a full survey?).

A comprehensive survey might work for you, but it ultimately creates additional load when it comes to processing the feedback: you’ll need to sort the various data points into meaningful categories, and a) you can’t predict the exact context the user was in when answering the survey, while b) that lack of context makes the user’s insight weaker.

How can you be sure that the user even remembers what feature you’re talking about, or will have the context required to provide meaningful feedback?

To get better feedback, you might be able to segment users into categories like new and experienced before delivering the survey, or break questions down and send smaller surveys - but if you're delivering surveys over email, you’re still left with the problem of lost context.

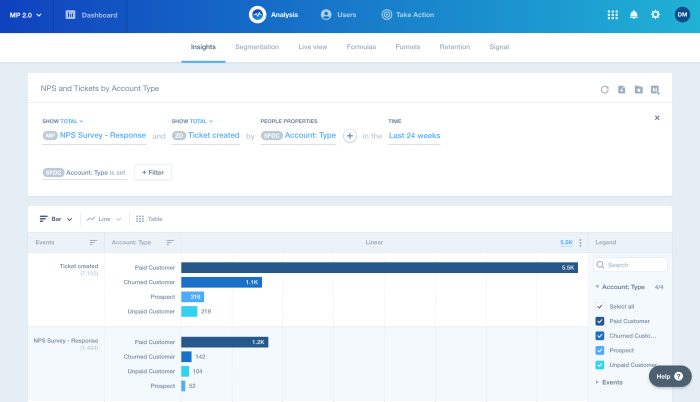

Leverage user analytics and tracking

This is a foundational aspect of making sure you can always deliver contextually relevant surveys. How can you deliver effective surveys if you don’t understand who the surveys are supposed to be targeting?

User segmentation is a useful concept to understand before you start writing your surveys. It begins with a clear understanding of your product’s value proposition, and how that projects onto the needs of your users.

An example of correlating user segments with key events in Mixpanel

There are a bunch of analytics tools product managers can use to monitor user trends and see how users are engaging with the product, and most can be integrated into your existing work systems. With proper analytics tooling, product management teams can make data-driven decisions in all aspects of product development and improvement.

Our article exploring product analytics stack options is a great overview of the best solutions available right now, whether you’re looking to get started from scratch or just curious about supplementary tools to add to your existing analytics toolkit.

User analytics can help you communicate better by showing how well your product aligns with your users’ needs:

Why is your product valuable to your users?

What kind of product success does it enable them to achieve?

How do specific features in your product help your users achieve their specific goals and objectives?

If you can confidently answer these questions, you are ready to create surveys that speak to your users’ goals and objectives and compel them to provide feedback.

Keep surveys shorter

This one’s pretty simple; it works on the principle of reducing as much friction as possible to increase the likelihood of users actually completing your surveys.

If a user sees a survey prompt telling them it’ll take “less than a minute”, they’re far more likely to respond compared to a survey without any indication of how long it will take them. Remember, users don’t owe you feedback - you have to compel them to hand it over.

Microsurveys are great for this because they’re designed to be short (typically a single question). This brings us to the next point...

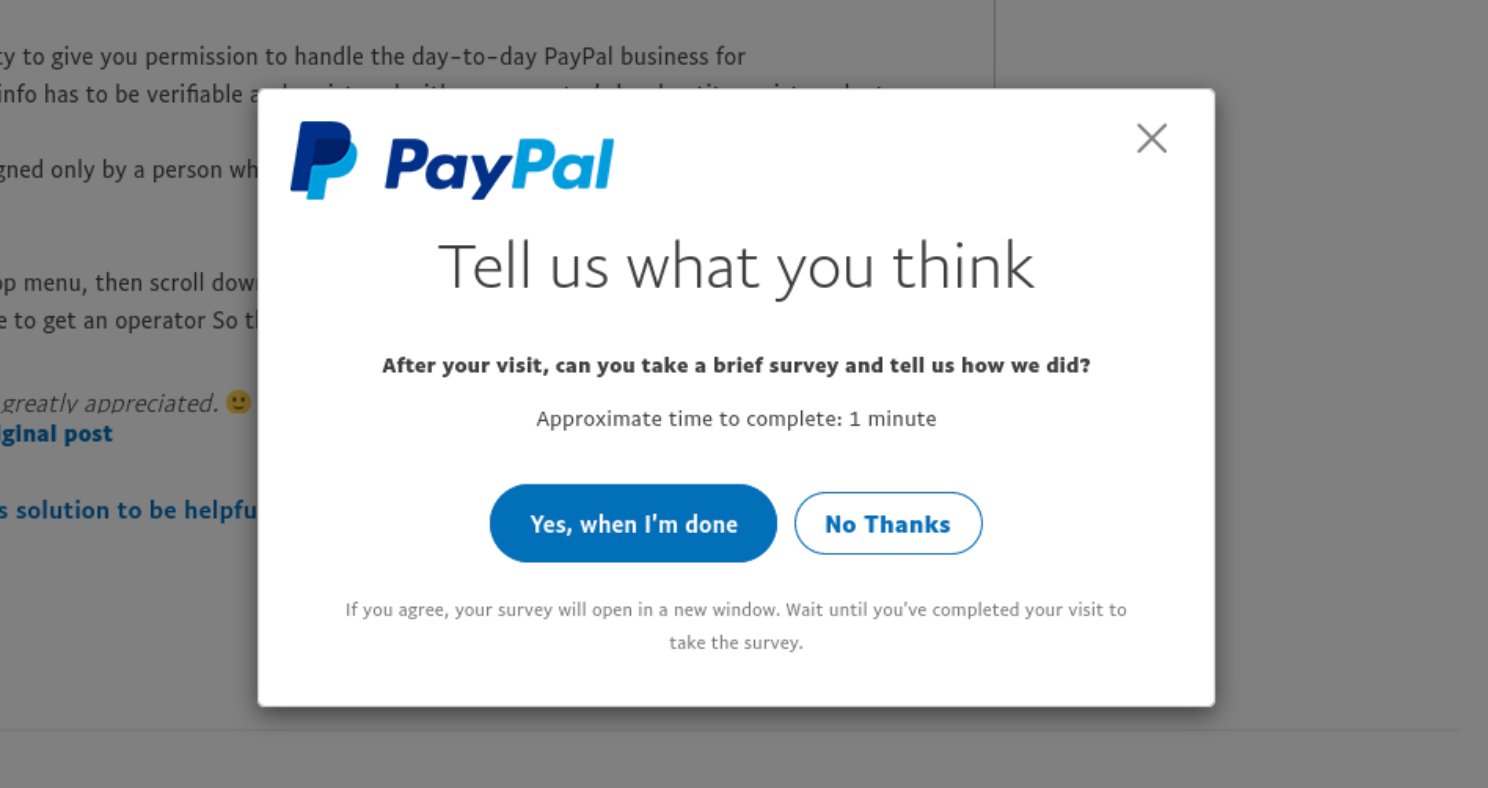

Deliver surveys in-product

PayPal shows this microsurvey at the relevant moment - when a user has just interacted with support content.

Microsurveys are essentially the culmination of all of the above points. They allow you to deliver brief, specific questions to specific users (based on in-product analytics) at exactly the right time (in-flow context).

Designing microsurveys means considering the user’s journey through your product. What specific in-product actions should trigger a microsurvey to appear? What kind of behavior might indicate the user is ready to provide valuable feedback? All of this can be tailored to specific features.

TIP: A common mistake product managers make when delivering microsurveys is that questions get asked too early. If the microsurvey appears immediately after users make the decision to start using a feature, how are they going to provide useful feedback? Give your users enough time to familiarize themselves with a feature before triggering microsurveys with specific in-product actions.
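
A trigger rule along those lines might look like the Python sketch below. The usage threshold of three, the `FeatureUsage` record and its field names are all assumptions for illustration; the right threshold depends on the feature and on what your analytics tell you.

```python
from dataclasses import dataclass

# Hypothetical threshold: how many times a user should have used
# the feature before being asked about it.
MIN_USES_BEFORE_SURVEY = 3


@dataclass
class FeatureUsage:
    times_used: int
    already_surveyed: bool = False


def should_show_microsurvey(
    usage: FeatureUsage, min_uses: int = MIN_USES_BEFORE_SURVEY
) -> bool:
    """Show the survey only to users familiar with the feature, and only once."""
    return usage.times_used >= min_uses and not usage.already_surveyed
```

With these defaults, a user on their first use is not surveyed, a user on their third use is, and a user who has already answered is never asked again.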

Why Microsurveys are better than traditional user surveys ⚡

Why are Microsurveys preferable to traditional user surveys? Well, when you consider how common survey abandonment is, you begin to understand the importance of balancing the number and depth of responses to gather sufficient data. Best practices around user surveys today suggest that the shorter the surveys, the more likely they are to be completed.

At Chameleon, we believe that Microsurveys are the new standard for delivering in-product surveys. A Microsurvey is a single, clearly articulated question with space for comments and feedback.

“Microsurveys allow users to maintain cognitive flow and enable feedback without friction.”

We want to enable teams to have a complete overview of the customer. One of the best ways to build that picture is to use Microsurveys to gather immediate, highly actionable feedback from your users.

Run product surveys with Chameleon

In-app Microsurveys allow you to capture important user feedback when it's most relevant